Why Your Essay Gets Flagged by Turnitin in 2026

AI detectors like Turnitin are flagging more student essays than ever in 2026, including human-written ones. False positive rates are rising, university policies are shifting, and most students are caught off guard. Learn how AI detection actually works, why your writing gets flagged, and how running ChatGPT output through the right humanizer can protect your grade before you hit submit.

Let's be honest. You spent three hours writing that essay. You did the research, you cited your sources, and you even read it back to yourself out loud. Then Turnitin flagged it as 40% AI-generated. You didn't cheat. So why does the tool think you did?

This is the reality for thousands of students right now, and it's getting worse. AI detectors have become more aggressive, university policies are shifting fast, and most students have no idea how any of it actually works. This post breaks down exactly what's happening in 2026, why false positives are a real and documented problem, and what tools actually help.

The AI Detection Arms Race Is Escalating in 2026

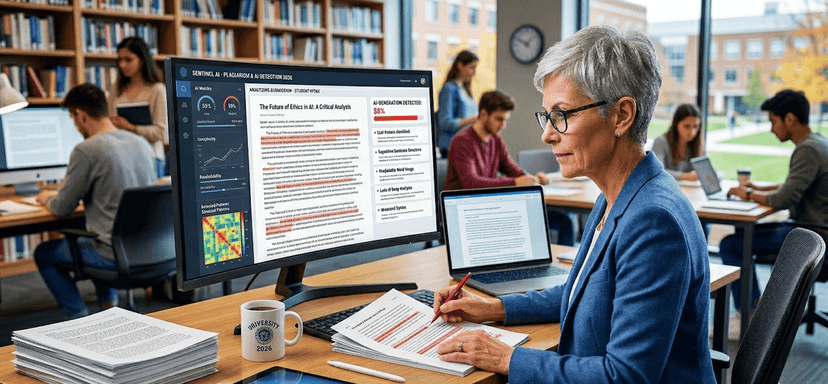

The landscape has changed significantly over the past year. Turnitin, GPTZero, Originality.ai, and Copyleaks have all rolled out updated detection models that are trained on much larger datasets and flag AI-generated writing with more confidence than ever before.

Here is the number that should tell you everything: Turnitin data released in February 2026 showed that roughly 15% of essay submissions now contain over 80% AI-generated writing. Back in April 2023, when Turnitin first launched its AI detector, that number was just 3%. Student use of AI writing tools has exploded, and the detectors are trying to keep up.

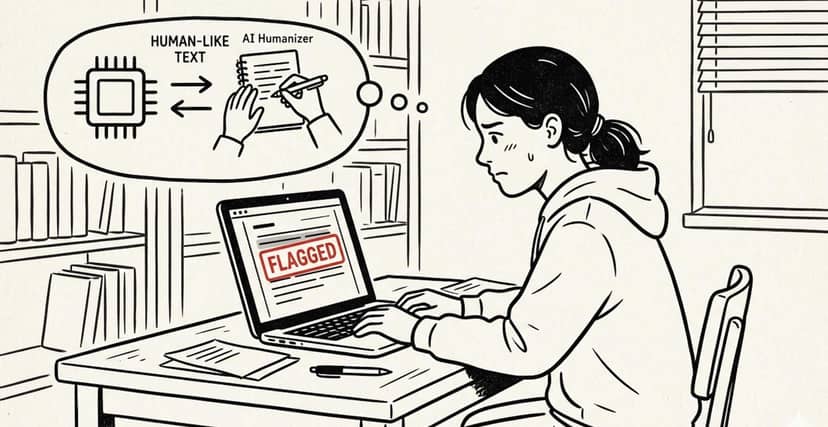

GPTZero's latest model claims a 91% accuracy rate on pure AI text. Turnitin has gone even further, updating its system specifically to detect AI humanizer tools, meaning software that rewrites AI content to look more human. The detectors are no longer just catching raw ChatGPT output. They're trying to catch the tools students use to clean it up.

That creates a significant problem for students who are writing their own work, because tighter detection thresholds mean more innocent essays get caught in the net.

The False Positive Problem Nobody Is Talking About

Here is what a lot of students do not realize. Turnitin does not actually tell your professor that you used AI. It gives a percentage: its model's estimate of how much of your submission's text was AI-generated. And that estimate can be wrong.

Independent research from 2024 and 2025 found that false positive rates on non-native English writing, heavily edited drafts, and technical academic prose can range from 5% to 12%. That is not a small margin. For students writing in their second language, or students who simply write in a clear, structured style, the risk of being wrongly flagged is real.
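
To make that range concrete, here is the simple arithmetic for a hypothetical batch of 200 essays, all written without AI by students in the groups above. The batch size and the `expected_false_flags` helper are illustrative assumptions; the 5% and 12% figures are the low and high ends of the range cited above.

```python
def expected_false_flags(num_human_essays: int, false_positive_rate: float) -> float:
    """Expected count of genuinely human-written essays wrongly flagged as AI."""
    if not 0.0 <= false_positive_rate <= 1.0:
        raise ValueError("false_positive_rate must be between 0 and 1")
    return num_human_essays * false_positive_rate


# Hypothetical cohort: 200 essays, none of them written with AI.
for rate in (0.05, 0.12):  # low and high ends of the cited range
    flagged = expected_false_flags(200, rate)
    print(f"{rate:.0%} false positive rate: about {flagged:.0f} of 200 honest essays flagged")
# prints:
# 5% false positive rate: about 10 of 200 honest essays flagged
# 12% false positive rate: about 24 of 200 honest essays flagged
```

Even at the low end, that is roughly one wrongly flagged student in every twenty.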

The problem is serious enough that Vanderbilt University publicly disabled Turnitin's AI detection feature in 2023 after concluding that its false positive rate in real-world use was unacceptably high. That is a significant statement from one of the most respected universities in the US.

The irony is painful. Students who use grammar tools like Grammarly or write in a formal academic style are sometimes more likely to get flagged than students who write loosely. Clean sentence structure and consistent transitions, which are exactly what students are taught to aim for, can look like AI patterns to a detection model.

How Universities Are Actually Responding

This is important context if you're anxious about a flag on your paper.

In 2026, many universities are pulling back from zero-tolerance AI policies and moving toward something more nuanced. The shift is toward transparency. Professors are increasingly told to treat an AI detection score as the start of a conversation, not as evidence of wrongdoing.

Turnitin itself is explicit about this. The company states that AI scores are indicators and not verdicts. Most institutions set a trigger threshold somewhere between 15% and 40%, and even above that threshold, professors are expected to use their judgment, consider the student's writing history, and factor in the context of the assignment.

A flag does not mean you're in trouble. But it does mean you need to be aware of how your writing looks to these systems, and ideally get ahead of the problem before you submit.

How AI Detection Actually Works (The Part They Don't Teach You)

To understand why humanizers work, you need to understand what detectors are actually measuring. It comes down to two core signals.

The first is perplexity, which is a measure of how predictable your word choices are. AI models tend to pick the statistically expected word more often than a human would. Human writers surprise you. They take detours. They use phrases that are slightly unexpected, which pushes perplexity up.
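
Here is a minimal sketch of the perplexity idea, under a big simplifying assumption: instead of the large neural language models real detectors rely on, it uses a tiny unigram word-frequency model with add-one smoothing, trained on a made-up reference sentence. Perplexity is the exponential of the average negative log-probability per word, so predictable wording scores low and surprising wording scores high. The function names and sample text are hypothetical, and this is not how Turnitin or GPTZero compute their scores.

```python
import math
from collections import Counter
from typing import Callable, List


def train_unigram(reference_tokens: List[str]) -> Callable[[str], float]:
    """Add-one (Laplace) smoothed unigram probabilities from a reference text."""
    counts = Counter(reference_tokens)
    total = sum(counts.values())
    vocab_size = len(counts) + 1  # +1 is one shared slot for all unseen words

    def prob(word: str) -> float:
        return (counts[word] + 1) / (total + vocab_size)

    return prob


def perplexity(tokens: List[str], prob: Callable[[str], float]) -> float:
    """exp(-(1/N) * sum(log p(w_i))): lower means more predictable wording."""
    if not tokens:
        raise ValueError("need at least one token")
    log_sum = sum(math.log(prob(t)) for t in tokens)
    return math.exp(-log_sum / len(tokens))


# Toy "language model" trained on one made-up sentence (illustrative only).
reference = "the cat sat on the mat the dog sat on the rug".split()
prob = train_unigram(reference)

predictable = "the cat sat on the mat".split()
surprising = "a zebra pondered quantum mat".split()
print(perplexity(predictable, prob) < perplexity(surprising, prob))  # prints True
```

A real detector swaps the word-count table for a neural model that predicts each word from its context, but the scoring formula has the same shape.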

The second is burstiness, which refers to variation in sentence length. Humans naturally write some very short sentences. Then sometimes longer, more complex sentences that build on the previous idea. AI tends to produce sentences of very similar length, which creates a flat rhythm that detectors look for.
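
There is no single standard formula for burstiness, and detector vendors do not publish theirs. One simple way to put a number on the sentence-length variation described above is the coefficient of variation (standard deviation divided by mean) of words per sentence. The sketch below assumes that definition and a naive punctuation-based sentence splitter; both are illustrative choices, not any detector's actual method.

```python
import re
import statistics
from typing import List


def sentence_lengths(text: str) -> List[int]:
    """Words per sentence, splitting naively on ., ! or ? followed by whitespace or end of text."""
    sentences = [s for s in re.split(r"[.!?]+(?:\s+|$)", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length: population std dev / mean."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.pstdev(lengths) / statistics.mean(lengths)


flat = "The cat sat on the mat. The dog lay on the rug. The bird sat in the tree."
varied = ("Humans vary. Sometimes they write a much longer sentence that builds "
          "on the previous idea. Then stop.")

print(sentence_lengths(flat), burstiness(flat))        # prints [6, 6, 6] 0.0
print(sentence_lengths(varied), round(burstiness(varied), 2))
```

The flat passage scores zero because every sentence has the same length; the varied passage, with sentence lengths of 2, 13, and 2 words, scores well above it.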

Turnitin also uses sentence-level analysis, looking at overlapping windows of text for patterns in syntax, transitions, and word choice. Critically, its model is updated quarterly. A method that worked six months ago might not work today.
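
To picture what "overlapping windows" means, the sketch below slides a fixed-size window across a list of sentences, scores each window with a placeholder scoring function, and then averages the window scores each sentence receives. The window size, stride, averaging rule, and the `score_window` function are all hypothetical, since Turnitin does not publish its exact segmentation settings.

```python
from typing import Callable, List, Sequence


def overlapping_windows(n_sentences: int, size: int = 3, stride: int = 1) -> List[range]:
    """Index ranges for runs of `size` consecutive sentences, stepping by `stride`."""
    if size <= 0 or stride <= 0:
        raise ValueError("size and stride must be positive")
    if n_sentences <= size:
        # A short text becomes a single window (or none if it has no sentences).
        return [range(n_sentences)] if n_sentences else []
    return [range(i, i + size) for i in range(0, n_sentences - size + 1, stride)]


def per_sentence_scores(
    sentences: Sequence[str],
    score_window: Callable[[List[str]], float],  # hypothetical window classifier
    size: int = 3,
    stride: int = 1,
) -> List[float]:
    """Score every window, then average the scores of all windows containing each sentence."""
    totals = [0.0] * len(sentences)
    counts = [0] * len(sentences)
    for window in overlapping_windows(len(sentences), size, stride):
        score = score_window([sentences[i] for i in window])
        for i in window:
            totals[i] += score
            counts[i] += 1
    # Sentences no window covered (possible when stride > 1) get 0.0.
    return [t / c if c else 0.0 for t, c in zip(totals, counts)]


def toy_scorer(window: List[str]) -> float:
    """Placeholder scorer for demonstration: 1.0 if any sentence contains "x"."""
    return 1.0 if any("x" in s for s in window) else 0.0


print(per_sentence_scores(["x", "b", "c", "d"], toy_scorer, size=2))  # prints [1.0, 0.5, 0.0, 0.0]
```

Because windows overlap, a sentence's final score depends on its neighbors, which is one reason editing a single sentence does not always change how the surrounding passage is scored.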

This is why simple paraphrasing tools fail. Swapping synonyms does not change burstiness or perplexity in any meaningful way. To actually move those signals, you need deeper structural rewriting.

What Actually Works: Choosing the Right Humanizer in 2026

The humanizer market has gotten crowded, and a lot of the tools are genuinely bad. One rigorous test involving 200 text samples per tool, run across five detectors and repeated three times, found that out of 12 AI humanizer tools tested, only three could get past all five detectors with a success rate of 90% or above. The rest failed, often badly.

What separates the tools that work from the ones that don't is depth of processing. Weak tools do synonym substitution. Strong tools work at the paragraph and document level, restructuring arguments and rewriting transitions to match the statistical patterns of real human writing.

If you use ChatGPT to draft content and want to clean it up before submission, learning how to properly humanize ChatGPT output is not about tricking a system. It is about making your AI-assisted draft genuinely reflect your own voice, your own structure, and your own reasoning. That is what a good humanizer actually does.

A few things to look for when choosing a tool:

- It should support different writing modes (academic, professional, and casual) so the output matches your actual context

- It should not just reword your sentences but actually vary rhythm and structure

- It should let you check your result against multiple detectors before you submit

- It should preserve your original meaning and citations, not rewrite your argument

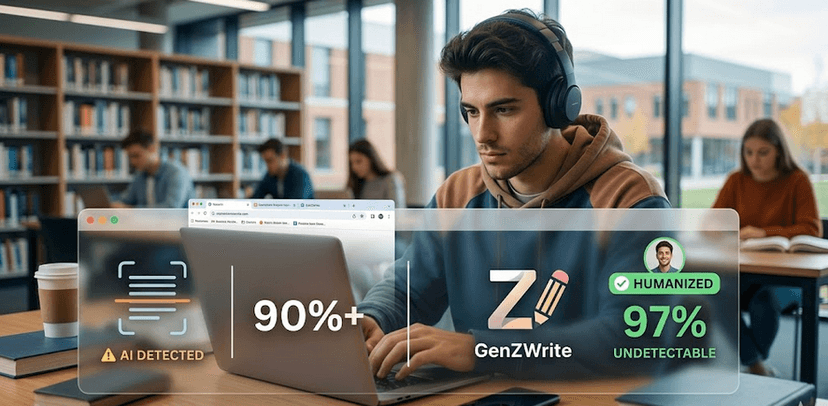

GenZWrite's Approach: Built for How Students Actually Write

GenZWrite was built specifically around the pain points above. The platform uses three writing modes tailored to different student needs: Academic Mode for essays and research papers, Social Mode for content creation and informal writing, and Side Hustle Mode for professional documents like resumes and cover letters.

Each mode works differently because the detection patterns Turnitin looks for in an academic essay are different from what GPTZero flags in a blog post. A general-purpose rewriter does not account for that distinction. GenZWrite does.

The platform also includes a built-in AI detector so you can check your score before submitting. Run your text through the humanizer, see your updated score, and iterate until you're confident. That feedback loop is the thing most students are missing when they get blindsided by a Turnitin flag.

The Honest Bottom Line for Students

AI detectors are getting better, but they are still not perfect. False positives are a documented, real problem that even universities are acknowledging. Blanket bans are giving way to nuanced policies. And the tools available to students for managing their AI-assisted writing have improved substantially.

The students who get caught off guard are the ones who either submit raw AI output without any editing, or who use weak paraphrasing tools that do not actually change the signals detectors measure. The students who stay ahead of the problem understand how detection works, choose tools built for the job, and treat humanization as a writing process, not a cheat code.

If you are writing in 2026, you are writing in a world where AI is part of the process. The question is not whether to use it. The question is how to use it well, and how to make sure your final submission actually represents your thinking, not a model's defaults.

That is exactly the problem GenZWrite was built to solve.

Frequently Asked Questions

Does Turnitin flag human-written essays as AI in 2026?

Yes. Independent studies have documented false positive rates of 5% to 12% on non-native English writing and formal academic prose. Vanderbilt University disabled Turnitin's AI detection feature partly for this reason.

What does Turnitin actually detect?

Turnitin uses a sentence-level classification model that measures perplexity (word predictability) and burstiness (sentence length variation). It is updated quarterly to account for new AI models and humanizer tools.

Can a humanizer actually help with Turnitin?

Yes, but only if it does genuine structural rewriting. Tools that only swap synonyms typically fail. The best tools in 2026 work at the paragraph level, restructuring rhythm and transitions to match human writing patterns.

Is using an AI humanizer cheating?

That depends entirely on your institution's policy. In schools where AI assistance is permitted, using a humanizer to improve clarity and naturalness is a writing tool like any other. Always check your school's specific policy before submitting.

How do I check my essay before submitting to Turnitin?

Since Turnitin is only available through your institution, use GPTZero, Originality.ai, or a platform like GenZWrite that includes a built-in detector. Cross-checking across multiple tools gives you the best indication of how your submission will perform.

Rita Jamal

AI Content Specialist
